Franco-Moroccan Research Center Summer School : Sustainable AI for Resource-Constrained Healthcare, from 7 to 11 July 2025

View

« La Science taille XX elles » met à l’honneur deux scientifiques de l’ISIR

View

The 10th IFAC Symposium on Mechatronic Systems and the 14th IFAC Symposium on Robotics from 15 to 18 July 2025

View

Retour sur la Journée des jeunes scientifiques de l’ISIR

View

Traduire le toucher affectif en sons

ViewDécouvrir l’ISIR

Nos équipes regroupent des chercheuses et chercheurs de disciplines variées, autour d’objets, d’applications et de questions scientifiques partagés relevant des deux défis majeurs de la robotique : l’interactivité avec les personnes humaines et l’autonomie.

L’avènement des intelligences artificielles et des robots induit des transformations profondes dans nos sociétés. Les chercheuses et chercheurs de l’ISIR contribuent à les anticiper en travaillant sur l’autonomie des machines et leur capacité à interagir avec les êtres humains.

Découvrez toutes les offres d’emploi, de stage, de doctorat et de post-doctorat de l’Institut des Systèmes Intelligents et de Robotique.

En ce moment

Actualités |

« La Science taille XX elles » met à l’honneur deux scientifiques de l’ISIR

Les délégations Paris-Centre et Villejuif du CNRS, en partenariat avec l’association Femmes & Sciences, présentent l’édition 2025 de l’exposition temporaire « La Science taille XX elles ». Parmi les lauréates de cette nouvelle édition, deux chercheuses sont issues de l’ISIR. Le 23 juin dernier, les délégations du CNRS Paris-Centre et Île-de-France…

Actualités |

Franco-Moroccan Research Center Summer School : Sustainable AI for Resource-Constrained Healthcare, from 7 to 11 July 2025

The Franco-Moroccan Research Center Summer School will be held at SCAI, Sorbonne University, Paris, from 7 to 11 July 2025. The new Franco-Moroccan Research Center Summer School offers immersive, interdisciplinary training for students and researchers from France and Morocco. The theme for 2025 is Sustainable AI for Resource-Constrained Healthcare. From…

Actualités |

9th Summer School on Computational Interaction from 16 to 20 June 2025

The 9th Summer School on Computational Interaction, ACM Europe School will be held at Sorbonne Université (Campus Pierre et Marie Curie) on June 16 – 20, 2025. This summer school teaches HCI students, researchers, and industry professionals computational methods and their application in user interface design, interactive systems, user modeling, and more….

Actualités |

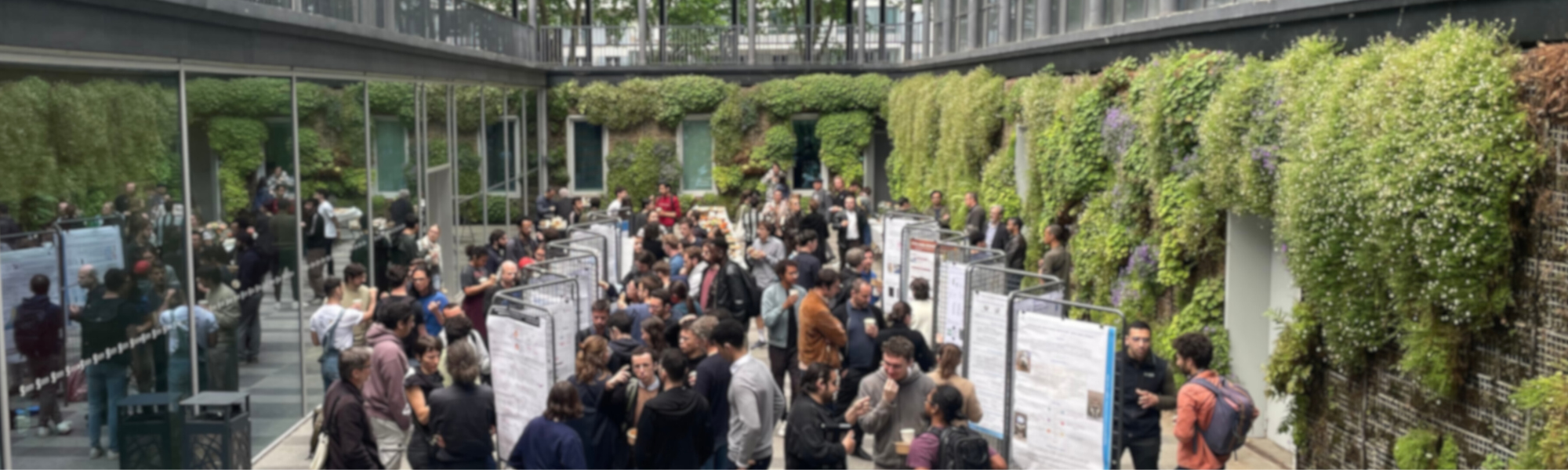

Retour sur la Journée des jeunes scientifiques de l’ISIR

Le lundi 26 mai 2025 s’est tenue la Journée des jeunes scientifiques de l’ISIR, un temps fort dédié aux doctorant-es, postdoctorant-es et ingénieur-es. Au cours de la journée, des présentations orales ont permis aux doctorant-es de partager leurs travaux, complétées par une session posters ouverte à l’ensemble des membres…

Actualités |

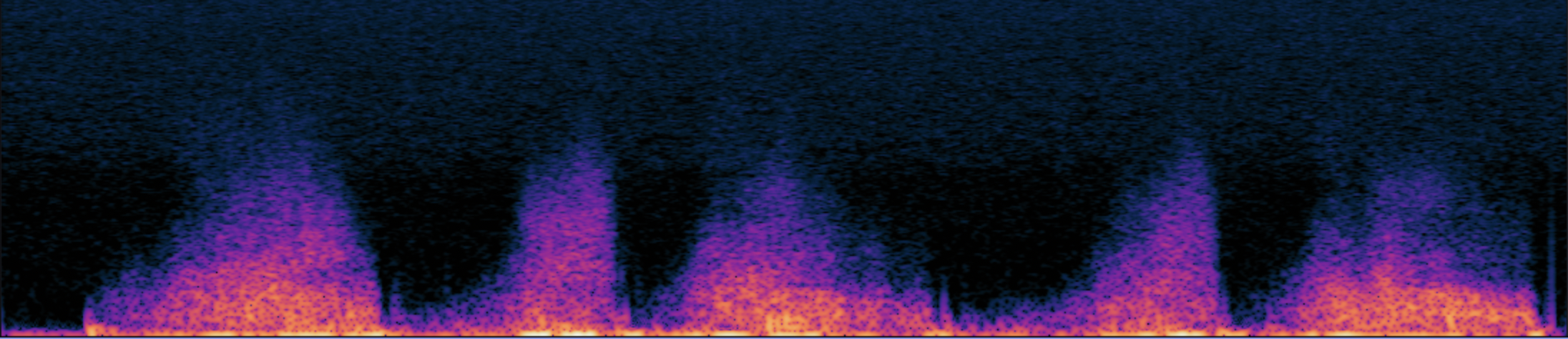

Traduire le toucher affectif en sons

À l’heure où les interactions à distance se généralisent, que ce soit en raison de l’essor du numérique, de la réalité virtuelle ou des périodes de distanciation sociale, une question persiste : comment maintenir un lien affectif sans contact physique ? Le toucher social, pourtant fondamental pour le bien-être psychologique et…

Actualités |

Vers une nouvelle génération d’interfaces haptiques multisensorielles

Alors que les interfaces numériques offrent une qualité croissante dans les domaines visuel et auditif, la dimension tactile reste encore largement sous-exploitée. Le projet MAPTICS s’inscrit dans ce constat, avec pour ambition de repenser le rôle du toucher dans nos interactions avec les écrans. Les interfaces humain-machine actuelles fournissent…

Actualités |

The 10th IFAC Symposium on Mechatronic Systems and the 14th IFAC Symposium on Robotics from 15 to 18 July 2025

The joint Symposia of the 10th IFAC Symposium on Mechatronic Systems (MECHATRONICS 2025) and the 14th IFAC Symposium on Robotics (ROBOTICS 2025) will be held in Paris from 15 to 18 July 2025. This unique event provides an exceptional opportunity for researchers, engineers, students, professionals, and experts in the fields of mechatronics,…

Actualités |

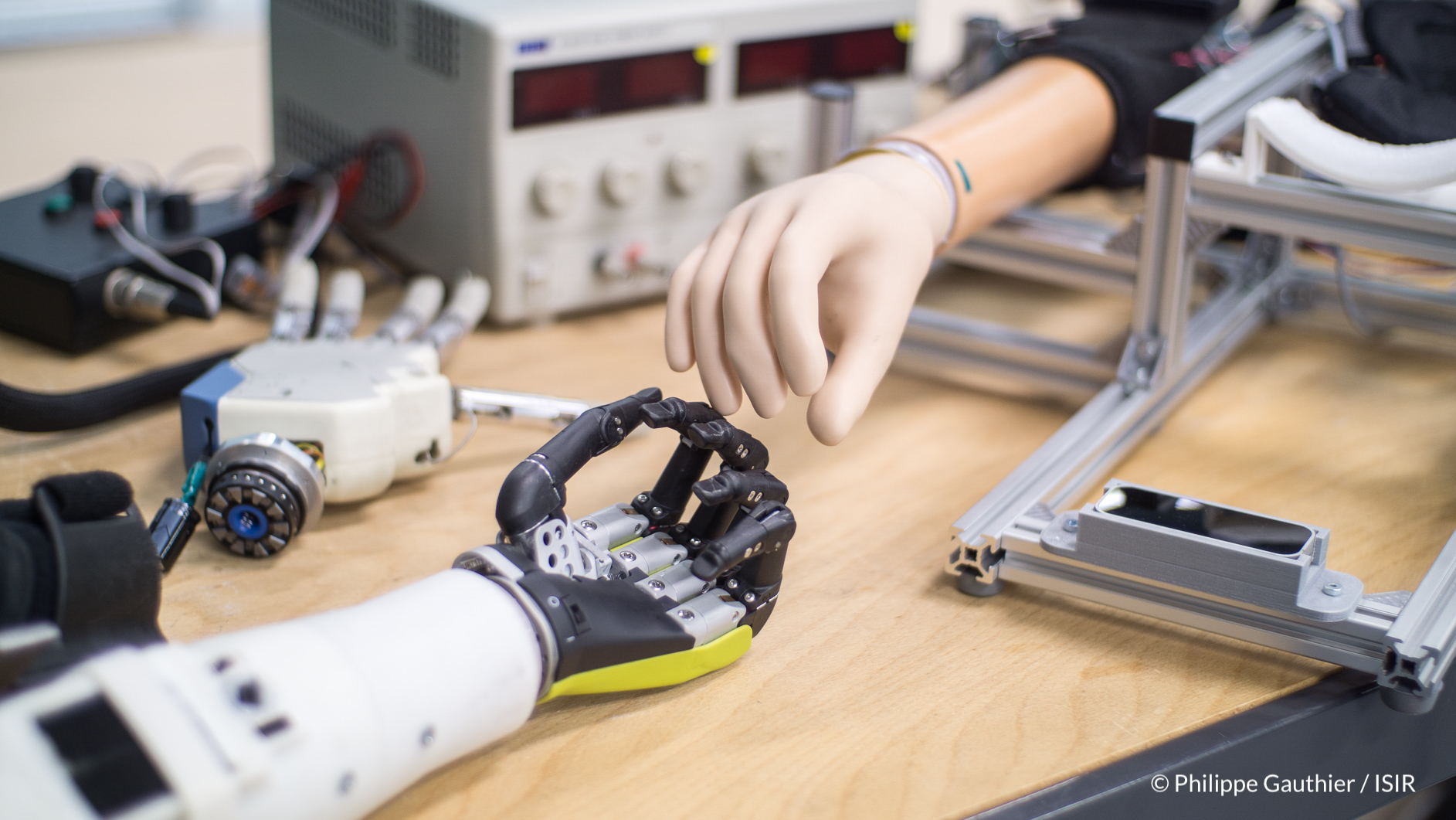

EXTENDER : piloter un bras robotique pour développer l’autonomie des personnes en situation de handicap

Source de l’article : CNRS Le projet EXTENDER a pour objectif de faciliter le quotidien des personnes en situation de handicap en développant de nouveaux modes de commande de bras robotique, adaptables à chaque utilisateur. Les sept partenaires impliqués entendent ainsi s’appuyer sur la technologie et la recherche pour…

Actualités |

Projection et débat : « Les scandaleuses » de Cécile Delarue

La cellule égalité-parité de l’ISIR vous invite à la projection du documentaire « Les Scandaleuses », de Cécile Delarue, qui retrace soixante-dix ans de luttes à travers des portraits de femmes qui ont osé défier les normes, souvent au prix du scandale. La projection sera suivie d’un échange avec la réalisatrice Cécile Delarue….

Actualités |

Améliorer l’interaction humain-agent pour un dialogue plus engageant

Les recherches de Catherine Pelachaud se concentrent sur l’amélioration des interactions humain-agent en développant des agents virtuels capables de communiquer de manière plus fluide et interactive. En explorant des approches innovantes comme l’adaptation en temps réel et le toucher social, ses travaux visent à rendre les dialogues plus personnalisés…