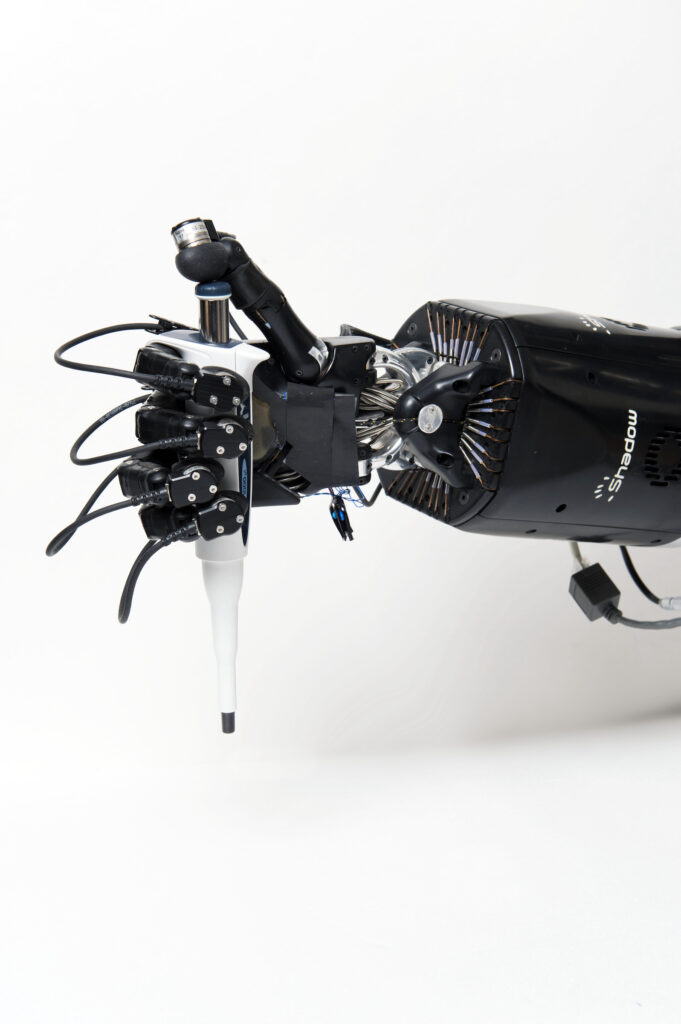

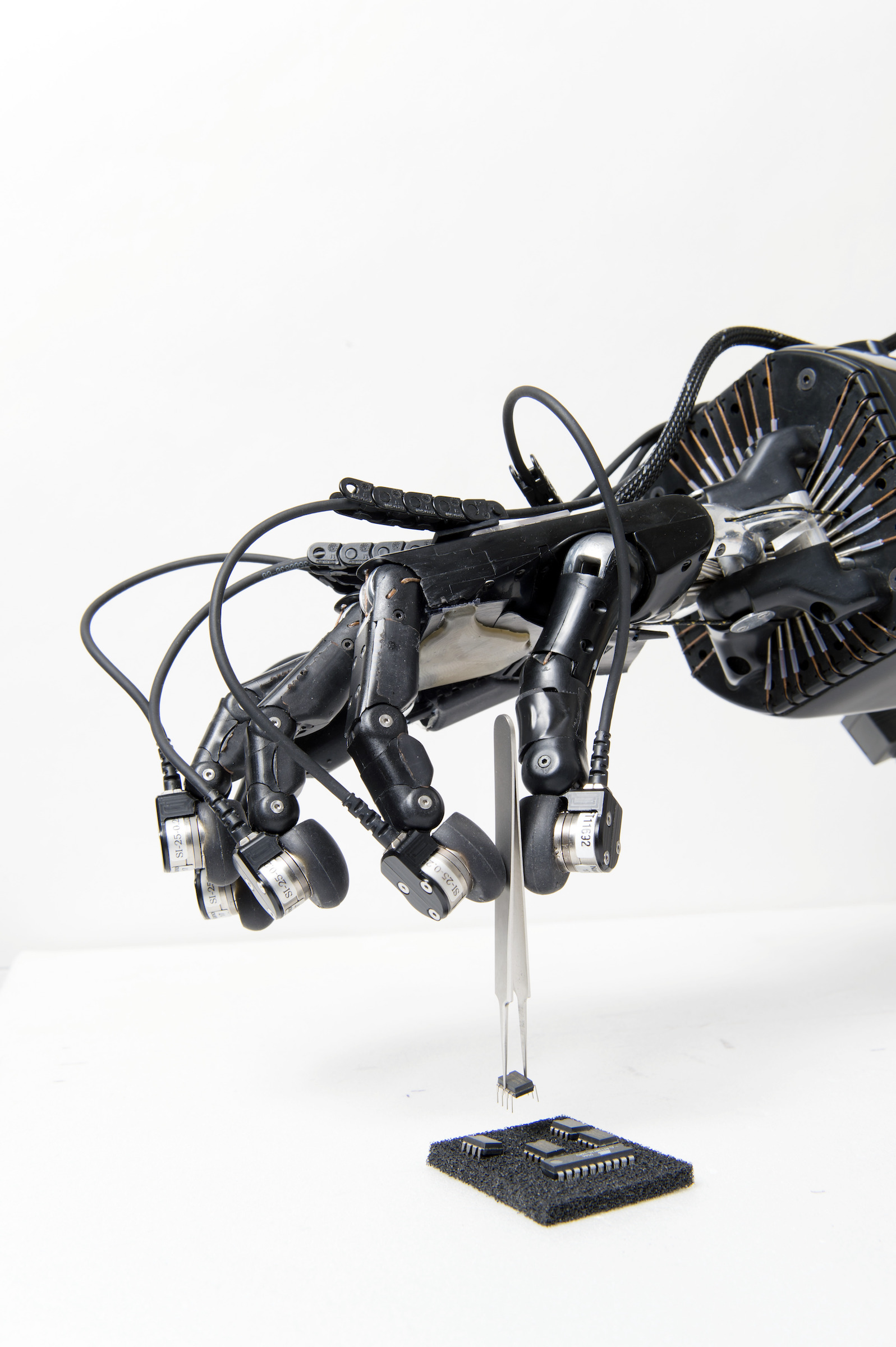

Project InDex: Robot In-hand Dexterous manipulation by extracting data from human manipulation of objects to improve robotic autonomy and dexterity

The InDex project aims to understand how humans perform in-hand object manipulation and to replicate the observed skilled movements with dexterous artificial hands, combining reinforcement learning and transfer learning to generalise in-hand skills across multiple objects and tasks. In addition, an abstract representation of previously acquired knowledge will be fundamental for reproducing learned skills on different hardware. Learning will use data from multiple modalities, which will be collected, annotated and assembled into a large dataset. The data and our methods will be shared with the wider research community to allow testing against benchmarks and reproduction of results.

Context

Humans excel at dealing with everyday objects and manipulation tasks, learning new skills, and adapting to different or complex environments. This is a basic skill for our survival as well as a key feature of our world of artefacts and human-made devices. Our expert ability to use our hands results from a lifetime of learning, both by observing other skilled humans and by discovering first-hand how to handle objects. Unfortunately, today’s robotic hands still cannot match human dexterity, nor are robotic systems fully able to understand their own potential.

Objectives

For robots to truly operate in a human world and fulfil expectations as intelligent assistants, they must be able to manipulate a wide variety of unknown objects, mastering strength, finesse and subtlety. To achieve such dexterity with robotic hands, cognitive capacity is needed to deal with uncertainties in the real world and to generalise previously learned skills to new objects and tasks. Furthermore, we assert that the complexity of programming must be greatly reduced, and robot autonomy must become much more natural.

The core objectives are:

– to build a multi-modal artificial perception architecture that extracts data of object manipulation by humans;

– to create a multi-modal dataset of in-hand manipulation tasks such as regrasping, reorienting and finely repositioning;

– to develop an advanced object modelling and recognition system, including the characterisation of object affordances and grasping properties, in order to encapsulate both explicit information and possible implicit object usages;

– to autonomously learn and precisely imitate human strategies in handling tasks;

– and to build a bridge between observation and execution, allowing deployment that is independent of the robot architecture.

The goals for the ISIR team are to design:

– a real-time planner for robotic grasp and in-hand manipulation,

– optimal feedback control to determine the intermediate states that yield the best possible performance for the transition from an initial state to the next desired state,

– multi-modal low-level controllers that let the artificial hand handle the target object, given that the robot system needs several different sources of information during the action sequence, which leads to a complex command structure,

– grasp adjustments: holding an object within a hand requires that the fingers hold the object firmly without any slippage and resist bounded external disturbances. In other words, the grasp must be stable.
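The no-slippage requirement in the last item can be illustrated with the Coulomb friction model, a standard textbook criterion that this page does not itself specify. The sketch below is only an illustration under that assumption: the function names `contact_holds` and `grasp_holds`, the inputs and the friction coefficient `mu` are all hypothetical, and it checks slippage at each contact only, not full grasp stability against external disturbances.

```python
import math


def contact_holds(force, normal, mu):
    """Return True if a contact force lies inside the Coulomb friction cone.

    force  -- 3D force the fingertip applies to the object (hypothetical input)
    normal -- unit contact normal pointing into the object
    mu     -- friction coefficient, assumed known for this sketch
    """
    # Component of the force along the contact normal (pressing force).
    f_n = sum(f * n for f, n in zip(force, normal))
    # Remaining component, tangent to the object surface.
    tangential = [f - f_n * n for f, n in zip(force, normal)]
    f_t = math.sqrt(sum(t * t for t in tangential))
    # No slip: the finger must push on the surface, and the tangential
    # load must not exceed what friction can resist.
    return f_n > 0.0 and f_t <= mu * f_n


def grasp_holds(contacts, mu):
    """Return True if every (force, normal) contact satisfies the no-slip test."""
    return all(contact_holds(force, normal, mu) for force, normal in contacts)
```

For example, with `mu = 0.5` a force of `(0.3, 0.0, 1.0)` against the normal `(0.0, 0.0, 1.0)` stays inside the cone, whereas `(0.8, 0.0, 1.0)` would slip.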

Results

Robotic in-hand manipulation is based on a combination of human motion primitives. Motion primitives have been extracted and shown to re-create paths efficiently. The design of the real-time path planner, which combines motion primitives under robotic in-hand manipulation constraints to generate paths, is under way.
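As a rough illustration of combining motion primitives under constraints, the sketch below searches for the shortest sequence of primitives that takes an object from a start orientation to a goal orientation. It is not the project's planner: the primitive names, their effects (rotations in degrees about a single axis), the angle limits and the use of breadth-first search are all assumptions made for this example.

```python
from collections import deque

# Hypothetical primitives: each rotates the object about one axis (degrees).
PRIMITIVES = {"roll_forward": 30, "roll_back": -30, "pivot": 90}


def plan(start, goal, primitives=PRIMITIVES, limits=(-90, 180)):
    """Breadth-first search for a shortest primitive sequence from start to goal.

    limits is an assumed manipulation constraint: the object angle must stay
    within [limits[0], limits[1]] after every primitive.
    Returns a list of primitive names, or None if the goal is unreachable.
    """
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        angle, path = queue.popleft()
        if angle == goal:
            return path
        for name, delta in primitives.items():
            nxt = angle + delta
            # Discard moves that violate the constraint or revisit a state.
            if limits[0] <= nxt <= limits[1] and nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [name]))
    return None
```

Calling `plan(0, 120)` returns a two-primitive sequence, while `plan(0, 45)` returns `None` because no combination of these primitives reaches 45 degrees. A real planner would work on continuous hand and object states and would have to meet the real-time requirement, which this toy search does not address.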

Partnerships and collaborations

This work is supported by the European project InDex under the CHIST-ERA framework, call topic Object recognition and manipulation by robots: Data sharing and experiment reproducibility (ORMR), and has received funding from the Italian Ministry of Education and Research (MIUR), the Austrian Science Fund (FWF) under grant agreement No. I3969-N30, the Engineering and Physical Sciences Research Council (EPSRC-UK) with reference EP/S032355/1, and the Agence Nationale de la Recherche (ANR) under grant agreement No. ANR-18-CHR3-0004.

Project partners are:

– Aston University – United Kingdom (coordinator),

– Technical University Wien – Austria,

– University of Tartu – Estonia,

– Sorbonne Université – France,

– University of Genoa – Italy.